So you’ve just finished your alpha software product and you are ready to release it to the world to get some feedback. Congratulations. Now you are ready for the next 90% of the software development effort – RAAMPUSS.

In a previous post, I talked about the competing product design centers. One of those design centers is what some pundits call the “-ilities,” for the suffix that ends so many of the categories: reliability, availability, and so on.

One of the fundamental challenges in new product development is balancing the drive for ever more useful functionality for customers with the need for a very high quality product. This paper gives an overview of the framework that I created and used at Primus Knowledge Solutions (acquired by Art Technology Group, which was in turn acquired by Oracle) to raise the less than stellar quality of our products and to make sure that we met the critical requirements of some of the hardest customers to satisfy – those providing world class customer support for their own products.

RAAMPUSS is an acronym that serves as shorthand for eight aspects of a quality product:

- Reliability

- Availability

- Administratability

- Maintainability

- Performance

- Usability

- Scalability

- Security

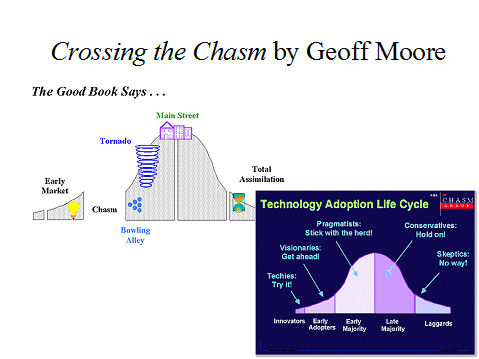

As the volume of product sales increases and/or the size of customer deals increases, RAAMPUSS becomes more important to the organization than incremental functionality because the product becomes mission critical for the customers. The diagram traces the natural progression of a product from initial idea to something that is used across several enterprise-scale corporations. During the first three stages, the importance of functionality, and of whether the idea will work in a real-world setting, overwhelms the need for high quality. But once an idea has proven itself in a pilot project, most customers want to leap to enterprise-scale deployment.

However, the engineering team is so busy trying to generate functionality and find the sweet spot for its product in a market niche that it is ill prepared for the sudden volume and scale demands on the product. Life for the customer and the engineering team becomes quite difficult at this point because it is very hard to reengineer the product for RAAMPUSS while the customer is screaming for immediate action to fix their crashing software. One customer like this would be bad enough, but often there are 5-100 customers clamoring for attention with severe problems in multiple parts of the product.

RAAMPUSS can be both a diagnostic tool to assess where problems are in existing products and a development strategy for those just embarking on product development or trying to figure out how to prioritize activities for the next release of a product.

The point at which RAAMPUSS becomes critical in the lifecycle of a product business corresponds with the chasm that Geoff Moore describes in his books. The development process that works on the left side of the chasm no longer works on the right side. Other functions of a company, most particularly the sales function, encounter this same difficulty. Often the managers who are great at working with early adopters are incapable of developing the right stuff for the early majority, and vice versa.

Reliability

Does the product work according to its specification? For the user, reliability means that they can use the product to produce work consistently. The most obvious reliability failure in software is an application or system crash. Less obvious is whether the product works the same way each time I use it, both day in and day out and through upgrades. Another form of reliability is not corrupting data, losing data, or computing results incorrectly. Yet another failure is a product that does the same thing inconsistently, like indenting bullets in Microsoft Word, where the inconsistency shows up both within a document and from release to release.

Examples of reliability issues Primus had with version 3.1 include the errors experienced by EMC, Compaq and Novell, where the Versant OODB crashed every time the online backup program was run. The regularity of the crashes (1-5 times daily) along with the time it took to bring the systems back up (EMC client PCs took 30 minutes) led to irate customers. More subtle are the problems related to search, where the results returned for an indexed solution vary depending on what phrases are used in what order.

The more stable the system, the higher the expectations for increased reliability.

Availability

Closely related to reliability is availability. If something happens to the application, how fast and easy is it to recover? Does it take 20 seconds to reboot (the Microsoft three finger salute) or do I require eight hours to reload a database? When service packs are installed do I have to bring the system down for several days?

In the end, availability is about uptime – 7x24x365 times the number of users. Problems with availability can be due either to unscheduled outages that result from bugs or to scheduled outages required to install new software, bring on new users, or reindex or convert a database. The goal is for the application to be up all the time.
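To make the uptime arithmetic concrete, here is a minimal Python sketch. The function names and the example figures are assumptions chosen for illustration; they are not Primus measurements.

```python
HOURS_PER_YEAR = 24 * 365  # non-leap year, matching the 7x24x365 framing


def availability(downtime_hours: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Fraction of the period during which the system was up."""
    if not 0 <= downtime_hours <= period_hours:
        raise ValueError("downtime must be between 0 and the period length")
    return 1 - downtime_hours / period_hours


def allowed_downtime_hours(target: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Total downtime (scheduled plus unscheduled) that a target permits."""
    if not 0 <= target <= 1:
        raise ValueError("target must be a fraction between 0 and 1")
    return (1 - target) * period_hours


def lost_user_hours(downtime_hours: float, users: int) -> float:
    """Downtime multiplied by the number of users who could not work."""
    return downtime_hours * users
```

Under these assumptions, a 99% target still allows roughly 87.6 hours of outage per year, and a single eight-hour database reload on a 500-user system costs 4,000 user-hours.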

Availability is also related to investments in hardware to achieve fault tolerance with no single point of failure. While the goal should be for our software not to fail, it is also important for the software to degrade gracefully. It is better to lose a single user than to lose the whole system and bring all the users down.

Administratability

With complex application software, one of the largest costs for a customer is the time they must spend administering the system. It starts with what is required to install a system. It extends to how difficult it is to reconfigure the different tiers of a system. At the end of the spectrum is how easy or difficult it is to add or remove a user. Additional items are keeping track of and maintaining dictionaries, reporting on usage, setting security levels, and ensuring the integrity of the data or knowledge. Where is administration performed – does it occur at the server computer or can it be done remotely? Are log files kept of all changes, and can the system be easily rolled back to some previous environmental state?

One of the major complaints about Primus eServer products was our inability to administer application functions remotely. A great deal of effort went into eServer 4.0 to provide a Web interface to all ADMIN functions. Another major benefit of eServer 4.0 was the automatic installation of client software, or its elimination in favor of a more robust Internet Explorer-like product.

Maintainability

The essence of maintainability is how easily, when something does go wrong with the software, the problem can be recognized as a software (versus a hardware) problem, identified as ours or some other vendor’s, located in the code, verified as really fixed, and the resolution distributed to all those affected by it. Ideally fixes come in three flavors:

- What can be done to get the system back up;

- Workaround to guard against the problem recurring or data being lost/corrupted;

- Permanent fix to ensure that the problem doesn’t happen again.

In parallel with the immediate fixing of the problem is a Root Cause Analysis (RCA) to determine if this is an isolated fault or a symptom of a bigger design or architecture error. The RCA then kicks off the fixing of the problem as well as the fixing of the software development process that allowed the error to creep in. That is, how can our development process be improved so that this class of errors never happens again?

Maintainability is primarily about processes and tools. Processes range all the way from the back end to the front end. At the back end: how does a problem manifest itself, how does a customer report it, how does support deal with it, how is the problem escalated into engineering, how is the problem fixed and the fix transmitted back to the customer, and how is the customer kept informed throughout this process? At the front end: how is the software designed and architected, how is it implemented, how is it tested, and how is it delivered to the customer?

During the eServer 3.1 unstable phase, one of the major observations was that our error logging code was of very little use for high priority problems. Thus, one of the key tasks of the Tiger Team created to stabilize 3.1 was to define a set of tools that we could put into eServer 4.0 to increase maintainability.
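The specific tools built for eServer 4.0 are not described here. As a generic illustration only, the sketch below shows one common way to make error logs more useful for high priority problems: record which component failed, what it was doing, and for which request, then let the error propagate. The logger name, field names, and helper functions are all hypothetical.

```python
import logging
from typing import Callable, TypeVar

T = TypeVar("T")

logger = logging.getLogger("app.errors")  # hypothetical logger name


def describe_failure(component: str, operation: str, request_id: str,
                     exc: BaseException) -> str:
    """Build one log line that says where, what, and for whom something failed."""
    return (f"component={component} operation={operation} "
            f"request_id={request_id} error={type(exc).__name__}: {exc}")


def run_logged(component: str, operation: str, request_id: str,
               action: Callable[[], T]) -> T:
    """Run action; on failure, log context plus the traceback, then re-raise."""
    try:
        return action()
    except Exception as exc:
        logger.error(describe_failure(component, operation, request_id, exc),
                     exc_info=True)
        raise
```

Re-raising after logging keeps the failure visible to the caller, so the log adds diagnostic context without hiding the problem.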

Performance

For our purposes we will define performance as what the user experiences. While there are many ways to improve the perception of performance, it comes down to how long it takes to perform the routine tasks that the individual user works with. While all of us would like performance to always be instantaneous, we’ve come to expect different levels of performance depending on the specific function. For example, typing response time should be instantaneous (the echoing of characters or clicks). Screen pops should be barely noticeable. Searches of several seconds can be tolerated. Printing can take longer, although if it takes very long, the user would like to see the printing become a batch job.
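One way to turn these expectations into something testable is a response-time budget per kind of task. The sketch below is illustrative: the category names and the thresholds in seconds are assumptions, not measured Primus targets.

```python
import time
from typing import Callable

# Hypothetical budgets in seconds; real values would come from user research.
BUDGET_SECONDS = {
    "keystroke_echo": 0.1,  # should feel instantaneous
    "screen_pop": 1.0,      # barely noticeable
    "search": 5.0,          # several seconds can be tolerated
}


def timed(task: Callable[[], object]) -> float:
    """Return the wall-clock seconds taken to run task once."""
    start = time.perf_counter()
    task()
    return time.perf_counter() - start


def within_budget(category: str, elapsed_seconds: float) -> bool:
    """True if the measured time fits the budget for this category."""
    return elapsed_seconds <= BUDGET_SECONDS[category]
```

A check such as `within_budget("search", timed(run_search))`, where `run_search` is whatever callable exercises the search path, can then run as part of a release test suite.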

The second aspect of performance is how much the user’s perceived performance varies as environmental conditions change. The ideal is for perceived performance not to change, but some variance is tolerated. Environmental changes that can affect performance are: speed of the hardware (client, server, network), number of users on the system (stand alone, average, peak), size of the database or knowledge base, and complexity of the task. A particularly irritating aspect of performance is if it degrades from release to release. Nothing is worse than a user having to fight a double whammy of new functionality and degraded performance that often comes with new releases. This last aspect was one of the major reasons we focused on performance for the eServer 4.0 release because in previous releases the perceived performance got worse. Early reports indicated that users were quite pleased with performance because it was dramatically improved at the integration point with call tracking software and similar or better search performance was experienced.
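Release-to-release degradation can be caught before users see it by comparing timings for the same tasks across two releases. This is a minimal sketch; the function name, the 10% tolerance, and the dictionary layout are assumptions.

```python
from typing import Dict


def regressions(previous: Dict[str, float], current: Dict[str, float],
                tolerance: float = 0.10) -> Dict[str, float]:
    """Return tasks that got slower by more than tolerance.

    Both inputs map a task name to its measured seconds. The result maps
    each regressed task to its fractional slowdown (0.30 means 30% slower).
    """
    slower = {}
    for task, old in previous.items():
        new = current.get(task)
        if new is not None and old > 0 and new > old * (1 + tolerance):
            slower[task] = (new - old) / old
    return slower
```

Tasks that appear in only one release are skipped, since there is nothing to compare them against.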

Usability

This component focuses on how easy the product is to use. Today, usability is often referred to in the larger context of User Experience (UX). Typically, this function reviews the user interface against good design principles and looks for excesses such as too many changes of context, too many key clicks, or uncertainty on the user’s part as to what to do next. While human centered design is more of a front end process looking at the context of the user in the real world, usability looks at the actions of the user in relation to the actual software. As a result, usability work is often done at the end of a project, when there is a functioning product to work with and to study.

Scalability

We define scalability in terms of the purchaser rather than the user. This function defines how many users can expect reasonable performance given a particular hardware/software environment. Ultimately this translates into the system cost per user. Scalability tests involve running loads against standard configurations for 100, 200, 400, 600 users and so on. The ideal is that for a given performance level there is a specified hardware configuration that can handle the tested number of users.
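The relationship between load tests and system cost per user can be sketched as follows. The class, field names, and any figures used with it are hypothetical; the sketch assumes each test run records the user count, the cost of the tested configuration, and a single response-time measurement.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class LoadTestResult:
    users: int               # simulated concurrent users
    hardware_cost: float     # cost of the tested configuration, any currency
    response_seconds: float  # measured response time at this load


def cost_per_user(result: LoadTestResult) -> float:
    """System cost per user for one tested configuration."""
    return result.hardware_cost / result.users


def largest_supported_load(results: Iterable[LoadTestResult],
                           max_response_seconds: float) -> Optional[LoadTestResult]:
    """Return the run with the most users that still met the response target."""
    passing = [r for r in results if r.response_seconds <= max_response_seconds]
    return max(passing, key=lambda r: r.users, default=None)
```

Given runs at 100, 200, 400, and 600 users on one configuration, the largest run that still meets the performance target sets the user count used in the cost-per-user figure.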

Security

Almost every day we read in the news of another security breach at a well known company. One of the more recent large security breaches, and one that went on for a long time, was with Sony’s PlayStation Network. Computer and application security involves multiple aspects of protecting information and property from threats like theft, corruption, or natural disaster. For any organization that holds personal information or critical data, the recommended process is to have an in-house security team and also to contract with external privacy experts who are well-versed in how to “hack” into application systems and databases.

Test Driven Development

As a development manager begins to understand the depth of critical processes necessary to continue to improve one’s RAAMPUSS quality, it is a good time to look at Test Driven Development (TDD) methods. While TDD does not solve all of the world’s ills, it goes a long way towards achieving RAAMPUSS goals.
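As a small illustration of the test-first idea, the sketch below takes the search reliability problem described earlier (results that vary with the order of the phrases) and pins the expected behavior down as a test. The `search` function and the index layout are hypothetical stand-ins, not the Primus search engine.

```python
import unittest
from typing import Dict, List, Set


def search(index: Dict[str, Set[str]], query: str) -> List[str]:
    """Return ids of documents containing every query term, sorted."""
    terms = query.lower().split()
    if not terms:
        return []
    matches = set.intersection(*(index.get(term, set()) for term in terms))
    return sorted(matches)


class SearchOrderTest(unittest.TestCase):
    # Hypothetical index: term -> ids of the solutions that mention it.
    INDEX = {
        "backup": {"s1", "s2"},
        "crash": {"s2", "s3"},
    }

    def test_term_order_does_not_change_results(self):
        self.assertEqual(search(self.INDEX, "backup crash"),
                         search(self.INDEX, "crash backup"))

    def test_all_terms_must_match(self):
        self.assertEqual(search(self.INDEX, "backup crash"), ["s2"])
```

In TDD the test class would be written first and would fail until `search` is implemented. Saved to a file, the tests can be run with `python -m unittest`.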
