At dinner the other evening at Crush with Katherine James Schuitemaker, my valued colleague in all things marketing and branding, I shared that I had finally produced a draft of the book on Attenex Patterns I’ve wanted to write for a long time. She listened patiently, without interrupting, as I talked energetically about the topics and ideas I wanted to highlight.

When I finished and took a deep, expectant breath, I asked, “So what do you think?”

Offering the gift that only longtime colleagues have permission to give, she looked at me and said, “Skip, that is so old school. You’ve waited so long to publish your first book that the world of book publishing has passed you by. Toss the book idea out and start developing the iPad app that both of us really want.”

While this was not the comment or pat on the back I was looking for, I knew I was about to get something better. So of course I had to ask “what do you think that app looks like?”

Katherine was at her eloquent, software-conceptualizing best as she launched into a synthesis of the threads we’ve talked about for the twenty years since we first met at Aldus (now Adobe). Energized, she leaned across the table and lamented, “I am so tired of the linear book. I am so tired of reading books and making notes in them that become completely inaccessible. What I want is to have a tool that is the combination of the two tools we built at Attenex – Structure for authoring and Patterns for making sense of all the reference materials.”

“I want you to provide the same content that you were going to put in your book but now do it in app form. But most importantly, I want that app to be the starting point of what I need. I need to be able to put in a current project that I am working on and have your application point out the gaps between your framework and what I am doing. I don’t want more information in the form of static content. I want dynamic, connected knowledge that is ‘news I can use’ when I need it and in the context of what I need.”

“Skip, you have to go back to your original vision at Attenex of connecting authoring (Structure) with discovering (Patterns). Stop with this book nonsense. This is your legacy that only you can do. The previous forty years are all prelude to preparing you for this killer app.”

Well, she had me now. Legacy. It was really unfair to entice me with the thought of producing a legacy.

While one part of my brain knew that she was on to something important, I couldn’t let go of the idea of writing a book now that I finally had the energy, motivation and stamina to do the writing. With my high tolerance for ambiguity, I looked her straight in the eye and said, “I’m going to be incongruent for a bit. My gut tells me that you are right on. Yet, my analytic brain is fighting your idea something fierce. So I’m going to let my analytic self argue with you for a half hour and then I am going to agree with you and change course in some fundamental ways.”

Katherine was very patient with me for the next half hour as I served up objection after objection. She did her best not to laugh as we’d played this game many times before. Finally, as my “objection energy” ran out, I said “OK. New game. How do we marshal the resources to make it happen?”

As we parted, Katherine turned to me and commanded “Skip, free us from the tyranny of the linear book!”

My test for any good idea is how much energy I have for the idea when I get up the next morning. Based on the frenetic writing that occurred over the next couple of days and the meetings I set up to corral the resources, this idea was clearly the right one. I sent this email message to Katherine the next morning:

Katherine,

I don’t even know where to begin.

You have such a wonderful way of hearing, synthesizing, guiding, shaping and blowing my mind.

I knew there was a reason I’ve been procrastinating in writing the book. The main reason I bought the iPad at launch was that in Steve Jobs’ announcement he talked about the future of the iPad as combining video and books – the Vook. That’s what I wanted to get experience with – to learn how to author a Vook or beyond. I knew it couldn’t be linear, but the 60 years of reading linear books blinded me. Yet, I’ve been disappointed in all the attempts that I’ve seen: the many Vooks I’ve bought, Flipboard, The Daily, and the Business Model Generation iPad app.

Using the iPad for several hours every day has transformed the way I work, play and think. But not far enough. Last night you moved the needle far beyond what I’m experiencing.

As a starting point, I’m attaching where I’ve gotten so far in authoring what I want to say about Patterns for the iPad. After last night this is a start, but there needs to be so much more.

And my mind wouldn’t shut off last night.

In retrospect, the seeds of your insights last night were planted in this memo to the Attenex team. While I kept coming back to these thoughts over the years, I clearly didn’t understand the implications of the last couple of paragraphs even though these ideas spawned the personal patterns work that Eric Robinson and I iterated through. I was blinded by Patterns as a discovery and review tool. Yet all the puzzle pieces are there in Patterns when we added meta-data tagging.

Email Message from Skip to Attenex Staff: August 31, 2001

In life there are little things and big things. In the context of business, August 15, 2001, was a “big thing” day for me.

In 1968 I was fortunate to get a job in a psychophysiology research lab at Duke Medical Center at the start of my sophomore year in college. We ran experiments on human subjects looking at their physiological responses to behavior modification therapies and to different psychiatric drugs. To better deal with experimental control and real time data analysis of EEGs and EKGs, we purchased a Digital Equipment PDP-12 (the big green machine). It had a mammoth 8000 bytes of memory and two pathetic tape drives that held 256,000 bytes of storage.

Embedded in the rack of the computer was a big green CRT which could display wave forms as well as text. A simple teletype device served as the keyboard. While we were controlling the experiments, we displayed in real time the wave forms from the physiological data of the human subjects. We experimented with multi-dimensional displays of EKG vs EEG vs the user task analysis. It was so fun to get lost in “data space.” [A former HCDE student, Denise Bale, calls this “dating her data.”]

Along with doing all the programming for the lab experiments, I got to use the machine to play my first computer game (Spacewar). It was so cool being able to control a space ship in the solar system and have it affected by the gravity of the planets on the CRT. There was no mouse at that time, but we used several potentiometers and toggle switches to control the X, Y and Z coordinates along with the firing of guns. Controlling green phosphor objects was a real feat for those of us who have no hand eye coordination.

One semester while procrastinating in writing several term papers, I wrote a text formatting application called Text12 which was modeled on Text360 for the large IBM mainframes of the time. The formatting commands were eerily similar to the HTML format that we know today. The result was that I could enter and edit the text of my papers and then print them out on a letter quality device. It eliminated all the messiness of using a manual typewriter and white-out. Several times at 2 a.m. I hallucinated about combining Spacewar, Complex Wave Form Pattern Detection and Text12 so that I could take the electronic texts I was creating, analyze them, and display them in three dimensional spaces by the relatedness of the concepts within the papers. I got carried away thinking of a new document being indexed and “blasting” links throughout the galaxy of documents. I could almost feel the gravitational attraction of the important documents.

Over the next 10 years as computer processing power grew from the PDP-12 to the PDP-11 to the DEC VAX computers (wow, 4 megabytes of virtual memory space for a program and 60 megabyte hard disks), I would periodically do a midnight coding project to try to bring my hallucinations from 1968 into reality. Nice idea, but there were never enough algorithms, CPU power, or memory. And there were precious few electronic text sources available to actually index unless I wanted to type them in myself.

As I became a manager and began to acquire research budgets, I would squirrel away a little money each year to see if the technology was ready to tackle the vision. The technology was never ready and there was relatively little research into the indexing and display of document collections until the early 1990s. The other side of the coin was that there was no clear idea of the business value of such a tool. We’d use these prototypes to try and impress internal funders to create some larger research projects. But nobody ever funded us beyond the prototypes.

During this time I hooked up with Russ Ackoff of the Wharton School at the University of Pennsylvania. One of the many “idealized designs” that he worked on was a distributed National Library System that he published a book about. This design called for all the texts to be in electronic format and available for searching. A key feature of the system was to generate “Invisible Universities”. That is, using the reference lists of published papers and books, find out who references whom. This system could then create influence diagrams of idea evolutions. I was really hooked then on the possibilities.

One of the many reasons I joined Primus a couple of years ago was to bring this vision to reality using the Primus Knowledge Engine as a foundation. We even licensed the Inxight ThingFinder software to help us do the indexing we needed to automatically author “solutions” for our knowledge base. We got started but it became clear that we had no visualization talent within the engineering department and no clear idea of the business driver for such a technology.

Which brings us to Preston Gates and Ellis (now K&L Gates) and Attenex. Thanks to Marty Smith who connected this semantic indexing and visualization with the electronic discovery problem, we now had the baseline tool to see the dream come true. Thanks to the efforts of Eric, last week we were able to connect the indexing capabilities of Microsoft tools so that we could inhale MS Office documents into the document analysis tool and generate concepts from Word, PowerPoint, Excel, HTML, and Adobe PDF documents. Then, we were able to load an Attenex Patterns Document Mapper database with my research papers from the last several years about customer profiles, document visualization and knowledge management.

Then Kenji and Dan figured out how to cluster long documents and normalize the frequencies of the concepts. And Lynne added the final layer of being able to add a document viewing window for the multiple formats along with cleaning up the interaction with the concept window panes on the right side of the Patterns display.

At 5PM yesterday, I saw my 30 year dream come alive. I was able to display my research papers. I navigated around the clusters and the concepts. And then when I selected a document, whether it was MS Word or a PDF, up it would pop in its own document viewer. Unbelievable. The only thing missing is the ability to index the books that I have in my home library.

But synchronicity strikes again. Just this week, Amazon.com started selling electronic versions of the popular management texts that are a core part of my library. They come in either Microsoft Reader or Adobe eBook format. I quickly bought ebooks in each of the formats to see if we could index them. Of course they are protected from that. So close, yet so far. But then it occurred to me: books are intellectual property. I bet that someone in the Intellectual Property Practice at K&L Gates was involved in negotiating the licenses for some of the book properties. Sure enough, several folks in the group were. So hopefully the last step in the journey of the dream is close at hand: the ability not only to pour my own writings and email, research reports, and published papers into the Attenex Patterns document database, but also to get full length books indexed.

Now I will be able to SEE the idea and concept relationships between all these wonderful publications that I can only fuzzily keep in my human memory today. I can’t wait to glean new insights as I index more documents and as I use the re-cluster on anchor documents to see relationships I’ve never been able to see before. I look forward to being able to publish meta-data about a corpus of documents and open up a whole new field of Document Mining.

As a researcher, teacher, and business person, yesterday was the happiest day of my professional life. My heartfelt thanks to all of you who’ve helped bring these concepts to life.

Katherine, your comment about authoring the Patterns story in an iPad version that the reader could add to in interesting ways reminded me of this slide – my content, our content, their content.

In working with clients the last couple of weeks I’ve been adding to the above and then making it a mirror image with the author on one side and the reader on the other.

The author’s side goes something like this:

- Collect

- Annotate

- Curate

- Distribute

- Engage – in the fullest sense of social media and Cluetrain Manifesto

- Recycle

The reader’s side goes something like this:

- Collect

- Understand

- Relate to current situation

- Relate to other information and signals I’m getting

- Engage

- Act on the information

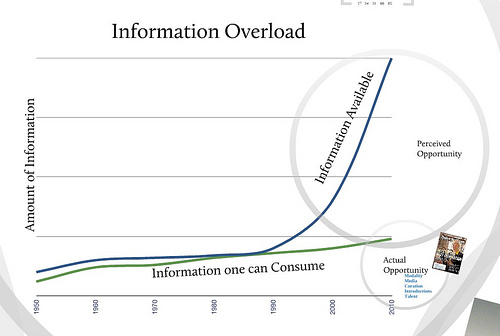

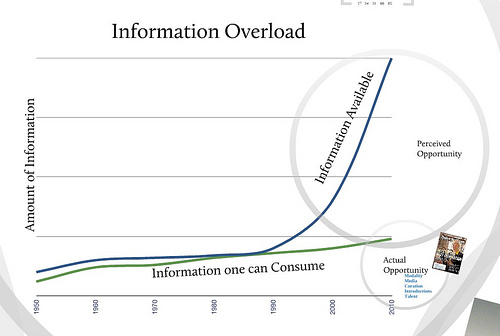

The Aggregage website looks at this phenomenon from a marketing perspective and then lays out a number of tools that help marketers cope with information overload.

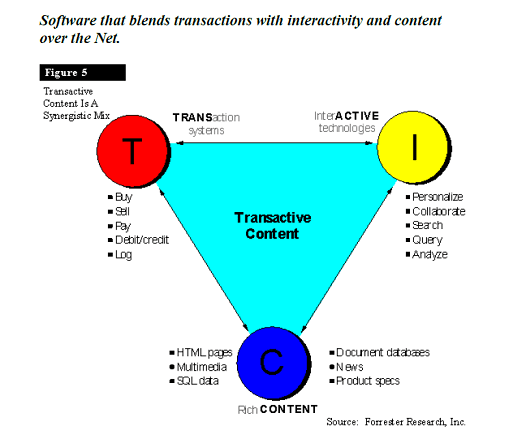

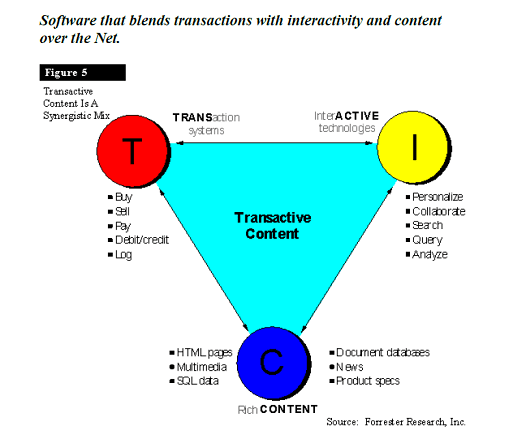

Another term for this is transactive content:

The last step is monetization: the ways that you can make money from the content. While these methods are somewhat specific to social media, they provide a good range of the monetization models open to you:

Top 10 Monetization Trends for Social Media and Microcommunities

“When it comes to savvy, proven, and incredibly successful tech investors, Ron Conway is a legend. He has a gift or an uncanny sense of shrewdness, or a fusion of both, to identify the real opportunities that will transform into successful exits and also fuel and inspire aggressive innovation in the process.

“To help entrepreneurs, startups, and industry leaders capitalize on the tremendous opportunity that social media presents, Conway offers his vision for the top 10 ways to monetize real-time conversations:

- Acquiring followers

- Advertising – Context and display ads

- Syndication of new ads

- User-authentication; verifying accounts

- Commerce

- Payments

- Enterprise CRM

- Analytics; analyzing the data

- Coupons

- Lead generation”

Even the original vision for Attenex had the two pieces I felt were critical to a viable company – the authoring piece (Attenex Structure) and the reviewing/discovery piece (Attenex Patterns). While they were sort of an integrated whole in my head, they always appeared as two separate pieces to everyone else. Again, another thread of ideas dropped in the process of business focusing. It really is the combo of Structure and Patterns and then going way beyond with what devices like the iPad allow for.

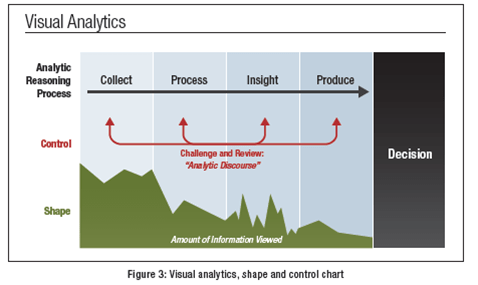

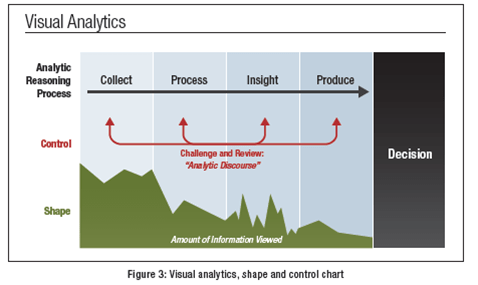

As a result, I never have seen Patterns as an authoring tool – it’s a discovery tool. Even our core slide on what visual analytics means (courtesy of Sean McNee) has no authoring component to it.

So the Tom Sawyer part of me wants to kick this off by getting all of the participants in Attenex Patterns over the years to contribute to a wiki-like environment to begin the profiling.

- Employees

- Preston Gates Contributors – the board, Kim Church and her IT crew

- Customers – Jones Day …

- Channel Partners – FTI, SPI, Forensics Consulting, KPMG, Strategic Discovery

- Competitors – Applied Discovery, Dolphinsearch, Stratify, Recommind …

- Consultants – George Socha (EDRM), Geoff Bock, Patrick Inouye (Attenex patent attorney) …

- Influencers – Monica Bay at Legal Technology News, Sedona Group, Judges (Shira Scheindlin)

In an ideal world these folks would also become part of the startup community to enhance the personal patterns.

As part of joining the network, each person must profile himself/herself (setting up data for the template use or predictor for your stuff):

- Myers-Briggs Score

- Social Styles Score

- Educational Background

- Work experience (pointer to LinkedIn profile)

- Number of years involved with eDiscovery

- Role in relationship to Attenex Patterns

- Authored Stuff

- Favorite memory of Patterns

- Tell me a story of your involvement

- What role did the product play in your life

- Key events in time

- Artifacts

- People photos

- Screen shots

- Important emails

- Use Cases

The above information would be used to build out the content as well as create the fodder for the semantic networks, social networks, and event networks. The meta tags for each of the authored content giblets and artifacts would place the content into the appropriate cluster or spine. And unlike Patterns we would allow content to be in multiple clusters.

From our conversation last night, what really hit home is the comparison in eDiscovery between linear review and what we created with Attenex (conceptual review and now automated review – predictive coding). I hadn’t made the leap from the linear book to the conceptual book or resource or dynamically mapped content or maybe the key term content in context.

And then the other things you suggested were to start the iPad App out with the story and then go through layers (overlaid by):

- The “Make Sense of My Stuff” – ability to add your stuff to the core book (like Tableau Public)

- For my own learning

- To see patterns across lots of other authors’ work

- To profile the project much like we are profiling the contributors

Now the next set of thinking is to follow through on the thread of how this transforms – reading and writing (in the fullest sense of multiple mixed media – text, photos, video, audio, simulations …), learning, and publishing. It is learning for the Facebook generation.

This “content in context” meme is clearly in the air. Both Amazon with their new Kindle Publishing format and Apple with their iPad iBooks textbooks announcement are creating more flexible formats for thinking outside the linear book. Easy to use toolsets are emerging from Vook and Pugpig.

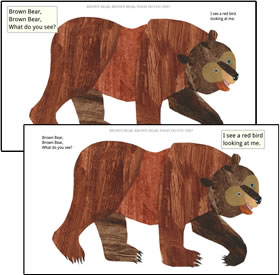

Amazon Children's book example

The Wall Street Journal weighed in with their “Blowing Up the Book” article on the new eBook formats.

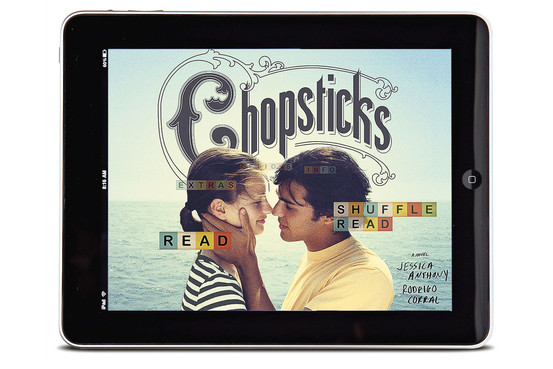

The Novel remixed: Chopsticks Children's book

So Katherine, many thanks for knowing me better than I know myself, and pointing me in a new “legacy” direction.

Peace,

Skip

One of the problems with powerful ideas and paradigm shifts is once they get in your mind you now see the world through that lens. While meeting with Duke Professor Kate Hayles recently, she kindly shared several chapters of her new book How We Think: Digital Media and Contemporary Technogenesis. As I flew back to Seattle I immersed myself in these Adobe PDF chapters on my iPad. I loved the insights and implications of what she was describing for the new forms of literature in digital media. I particularly liked her pointers to the Digital Humanities Manifesto 2.0 to describe the first two waves of the new field:

“Like all media revolutions, the first wave of the digital revolution looked backward as it moved forward. Just as early codices mirrored oratorical practices, print initially mirrored the practices of high medieval manuscript culture, and film mirrored the techniques of theater, the digital first wave replicated the world of scholarly communications that print gradually codified over the course of five centuries: a world where textuality was primary and visuality and sound were secondary (and subordinated to text), even as it vastly accelerated the search and retrieval of documents, enhanced access, and altered mental habits. Now it must shape a future in which the medium‐specific features of digital technologies become its core and in which print is absorbed into new hybrid modes of communication.

“The first wave of digital humanities work was quantitative, mobilizing the search and retrieval powers of the database, automating corpus linguistics, stacking hypercards into critical arrays. The second wave is qualitative, interpretive, experiential, emotive, generative in character. It harnesses digital toolkits in the service of the Humanities’ core methodological strengths: attention to complexity, medium specificity, historical context, analytical depth, critique and interpretation. Such a crudely drawn dichotomy does not exclude the emotional, even sublime potentiality of the quantitative any more than it excludes embeddings of quantitative analysis within qualitative frameworks. Rather it imagines new couplings and scalings that are facilitated both by new models of research practice and by the availability of new tools and technologies.”

Yet, it was with great pain that I read Hayles in the “old school” long form book in the new digital iPad medium. Every paragraph pointed to interesting sounding research. Now I was going to have to wait until I was back at my desk to chase all these links to go to the source material. Along with what Kate was describing I really wanted a new form of Attenex Patterns so I could view these documents and concepts in relationship to each other – through semantic links, social network links and event networks.

Social Network and Semantic Network Views in Attenex Patterns

I just wanted to scream as I realized the “purgatory” I am now in between the old school and the awaited next generation digital media.

As I finished up with Kate’s chapters, I turned my attention to David Weinberger’s latest book Too Big to Know: Rethinking Knowledge Now That the Facts Aren’t the Facts, Experts are Everywhere, and the Smartest Person in the Room is the Room. Imagine my continued pain when I came across this passage from Weinberger:

“I am aware that it is at best ironic, and at worst hypocritical, that I have written a long-form book, available only on paper (or on paper’s disconnected electronic simulacrum), that is arguing for the strengths of networks over books. My apology is of the unfortunate sort that does not justify the action so much as humiliate the perpetrator. And so: I am sixty years old as I write this, and am of a generation that takes the publication of a book as an achievement—my parents would have been proud. It’s also not irrelevant to me that book publishers still pay advances. Beyond these primordial and pathetic motivations—seeking money and Mommy’s approval—there are some other factors that mitigate the irony. I’m not saying “Books bad. Net good.” The privilege of holding the floor for the length of 70,000 words can allow ideas to develop in useful ways; if this book spends more time discussing networks than books, it’s because its author assumes that the case for books is made implicitly by every schoolroom with bookshelves, every paragraph of flap copy, and every public library. Further, for the past fifteen years I’ve been working in a hybrid mode that is not inappropriate to the transformation we’re living through: I have been out on the Web with the ideas in this book since before the book was conceived, and have profited greatly from the online conversations about them. (Thank you blogosphere! Thank you commenters!) Still, not only is the irony/hypocrisy of this book inescapable, it is so familiar in this time of transition that I wish someone would write a boilerplate paragraph that all authors of nonpessimistic books about the Internet could just insert and be done with.”

While I continue to enjoy Weinberger’s long form book and the transformation he enables in my understanding of knowledge, the echo of Katherine James Schuitemaker’s plea reverberates in my mind:

“Skip, free us from the tyranny of the linear book!”

It is long past time to go build some innovative software once again – my life pattern that repeats.

One of the great entrepreneurs of the 20th Century died in 2011 – Ken Olsen who founded Digital Equipment Corporation (DEC). For 23 years, Gordon Bell served as the Executive Vice President for Research and Development (both hardware and software) working closely with Ken Olsen to generate innovative hardware and software systems. I had the privilege of learning from both men during the years at DEC when I was building ALL-IN-1. One of the joys of being on email in the early 1980s was getting messages like the following from Gordon Bell. It is a tribute to Gordon that most of these recommendations are as fresh today as they were thirty years ago.
